Research

Current Projects

Towards Fairness-Aware Ranking by Defining Latent Groups Using Inferred Features

Group fairness in search and recommendation has drawn increasing attention in recent years. This paper explores how to define latent groups, which cannot be determined by self-contained features but must be inferred from external data sources, for fairness-aware ranking. In particular, taking the Semantic Scholar dataset released in the TREC 2020 Fairness Ranking Track as a case study, we infer and extract multiple fairness-related dimensions of author identity, including gender and location, to construct groups. Furthermore, we propose a fairness-aware re-ranking algorithm that incorporates both weighted relevance and diversity of returned items for given queries. Our experimental results demonstrate that different combinations of relative weights assigned to relevance, gender, and location groups perform as expected.
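As a rough illustration of how relevance and group diversity can be traded off with relative weights, the following is a minimal greedy re-ranking sketch. It is not the algorithm from the paper: the scoring rule, the default weights, and the item fields `relevance`, `gender`, and `location` are assumptions made for the example.

```python
from collections import Counter

def rerank(items, w_rel=0.5, w_gender=0.25, w_loc=0.25):
    """Greedy re-ranking sketch (hypothetical scoring rule).

    At each position, pick the remaining item with the highest weighted sum of
    relevance and a diversity bonus for groups that are under-represented
    among the items ranked so far.
    """
    remaining = list(items)  # copy so the caller's list is not modified
    ranked = []
    gender_counts, loc_counts = Counter(), Counter()
    while remaining:
        n = len(ranked)

        def score(item):
            # Bonus = 1 minus the share of already-ranked items in the same group.
            g_share = gender_counts[item["gender"]] / n if n else 0.0
            l_share = loc_counts[item["location"]] / n if n else 0.0
            return (w_rel * item["relevance"]
                    + w_gender * (1.0 - g_share)
                    + w_loc * (1.0 - l_share))

        best = max(remaining, key=score)
        remaining.remove(best)
        ranked.append(best)
        gender_counts[best["gender"]] += 1
        loc_counts[best["location"]] += 1
    return ranked

# Hypothetical documents with inferred author groups.
docs = [
    {"id": "a", "relevance": 0.9, "gender": "F", "location": "US"},
    {"id": "b", "relevance": 0.8, "gender": "F", "location": "US"},
    {"id": "c", "relevance": 0.7, "gender": "M", "location": "EU"},
]
print([d["id"] for d in rerank(docs)])
```

Setting `w_gender` and `w_loc` to zero reduces the sketch to a plain relevance sort, which makes it easy to compare weight combinations side by side.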

Duration: Spring 2021; Students: Chirag Shah, Yunhe Feng, Daniel Saelid, Ke Li, Ruoyuan Gao

Explainable Recommendation Systems

The complexity of machine learning and latent factor models, such as matrix factorization or deep neural networks, makes recommendation systems less explainable. On one hand, the lack of explainability makes it difficult for system designers to understand why a model fails or works, and therefore to improve and refine it; on the other hand, it is also difficult for users to understand why particular items are recommended. More importantly, many recommendation systems nowadays are useful not only for information seeking but also for complicated decision making, where they provide supportive information and evidence. For example, medical workers may need comprehensive health care document recommendations to make medical diagnoses, and legal practitioners need legal retrieval or case recommendation to help evaluate certain legal cases. In these tasks, explainability of the system and its results is important, so that users can understand why a particular result is relevant and how to leverage the result to take action. Overall, the lack of good explainability weakens the transparency, effectiveness, persuasiveness, and trustworthiness of these systems, making explainable recommendation an important research issue for the community. In this project, we aim to 1) integrate knowledge bases into machine learning algorithms so that the system can produce good ranking performance as well as knowledge-enhanced explanations; 2) generate natural language explanations for recommendations, which gives the machine the ability to tell users why it made a certain decision; 3) evaluate explainable recommendations by proposing novel and concrete evaluation metrics and benchmarks.

Duration: Autumn 2020-ongoing

Exploring Fairness in Information Retrieval Systems

How can we balance the inherent bias found in search engines and achieve a sense of fair representation while effectively maintaining a high degree of utility? We are working on addressing inherent bias found in search engine results, exploring all aspects of search engines. So far, we have explored several ranking strategies to investigate the relationship between utility and fairness within Google search, and we have proposed an entropy-based metric to measure the degree of bias and to effectively evaluate re-ranking algorithms. We have also developed a new framework that allows one to estimate the costs and possibilities of achieving a certain level of fairness in a system while keeping users satisfied to some minimum limit.
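To give a sense of what an entropy-based measure over a ranked list can look like, here is a minimal sketch that computes the normalized Shannon entropy of group labels among the top results. It is an illustration only and not necessarily the metric proposed in this project; the function name, the group labels, and the normalization by `num_groups` are assumptions.

```python
import math
from collections import Counter

def normalized_entropy(groups, num_groups):
    """Normalized Shannon entropy of group labels in a result list.

    Returns a value in [0, 1]: 0 when every result belongs to one group,
    1 when results are spread evenly over all `num_groups` groups.
    """
    total = len(groups)
    if total == 0 or num_groups < 2:
        return 0.0
    counts = Counter(groups)
    # H = sum over groups of p * log2(1 / p)
    entropy = sum((c / total) * math.log2(total / c) for c in counts.values())
    return entropy / math.log2(num_groups)

# Hypothetical group labels for the top four results of two rankings.
print(normalized_entropy(["A", "A", "B", "B"], num_groups=2))
print(normalized_entropy(["A", "A", "A", "B"], num_groups=2))
```

Under this convention a higher value means a more balanced exposure across groups, so a re-ranking algorithm can be compared against a baseline by how much it raises the score at a given loss in utility.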

Duration: Summer 2018-ongoing; Students: Ruoyuan Gao

Information Fostering

What are the problems a searcher encounters during a search process that are not matched with the affordances a search system provides? How can we derive potential problems that are present or could occur in the future while a person is searching for information? How do we map a combination of task, topic, and problems to potential help? Two of the fundamental problems for information seekers are: not being able to express their needs due to lack of understanding of the task/topic at hand, or the way a resource/system being used works; and not knowing what they do not know. Recommendations made by search systems are often limited to suggesting information objects only, and do not explore the possibilities of recommending a process/strategy, people, or other forms of suggestions. Information Fostering aims to address these problems at a fundamental level of the underlying search task that an information seeker is engaged in – by understanding the nature of that task, explicating the current and potential problems, and offering help that goes beyond what a typical search system could provide. Information Fostering is an idea of providing proactive suggestions and help to information seekers, thus avoiding potential problems and capturing promising opportunities in searching, before it is too late.

Duration: Fall 2017-ongoing; Students: Shawon Sarkar; Past Students: Jiqun Liu, Matt Mitsui

Past Projects

A Qualitative Approach to Information Retrieval Research in Chronic Illness

People are increasingly turning to the Internet to search for health information, particularly after they have been diagnosed with a chronic illness. Although many studies have evaluated how people use the Internet to interact with information, most of the research in this area cannot be generalized to adults diagnosed with chronic diseases. We do not currently understand what information patients search for, how they go about evaluating that information, and what impact the search process has on their own care or health outcomes. This study is a mixed-methods project that takes a qualitative approach to traditional interactive information retrieval research. It has three phases: a questionnaire; an interactive search session with several steps, including a think-aloud and a micro-moment time-line interview; and a follow-up interview carried out four to six months after the interactive search session. The aim of this study is to develop an understanding of the information needs, seeking, and use behaviors of patients diagnosed with chronic health conditions. This project will have a significant impact on health-related research by providing a longitudinal understanding of the impact of online health-information seeking on self-management and decision-making in patients diagnosed with chronic illness. This will in turn improve health outcomes, as engaged patients are more likely to adhere to treatments and perform self-management activities.

Duration: Fall 2017 - 2019; Students: Yiwei Wang under the guidance of Dr. Kaitlin Costello

Interactions with Healthcare Providers about Online Health Information

While many patients use the Internet for health information, the relationship between online health information seeking and communication with healthcare providers in chronic illness is not yet fully elucidated. We currently do not understand what role online health information plays in the clinical visit, the antecedent causes or health outcomes of online information seeking, or when and why online health information seeking occurs. This project takes a systematic review approach to the question of how healthcare providers and patients discuss online health information, specifically examining articles published in the domain of information behavior. Our intention with this project is to systematically describe the field to healthcare providers, informing their interactions with patients about online health information. This work will also uncover gaps in the literature, informing future research and intervention development.

Duration: ongoing - 2019; Students: Jeremiah King under the guidance of Dr. Kaitlin Costello

Successes and Failures in Information Seeking Episodes

People constantly face obstacles and failures in various types of information seeking episodes. These are often attributed to either the information seeker or the system/service being used for finding information. What is often ignored in such investigations is the context of the tasks that trigger information seeking or the strategies being used by the information seeker. A careful examination of the links between information seeking barriers and outcomes is needed. In this project, we look at where and why information seeking episodes succeed or fail, and how those successes/failures relate to the greater context of the tasks, the strategies/methods people employ, and the barriers/challenges they encounter.

Duration: ongoing - 2019; Students: Yiwei Wang

Information Seeking Intentions

As people become more accustomed to Web-based information retrieval, they are using online tools to address increasingly complex and personally significant queries. Current Web search engines, however, were developed to specifically help users find simple, typically factual information formatted as a single listed response to a basic query. These systems do not sufficiently assist the growing number of information seekers who need to engage in longer search episodes outside the one-query-one-response paradigm. Modern web search engines also do not support activities that require users to go beyond merely clicking on a search result, such as reading, evaluating, comparing, and using information. Our research addresses this problem by studying why people engage in such complex information seeking (i.e. their motivations), and what they try to accomplish during the course of an information seeking episode (i.e. their search intentions). Through this study, we would ultimately like to design and evaluate new types of search engines that help users accomplish their information seeking goals. In essence, we hope to develop personalized systems to support specific searchers, their goals, and their respective contexts. Specifically, this research will establish relationships between people's behaviors during an information seeking episode, the motivating goals that led them to engage in information seeking, and their specific intentions at any point during an information seeking task. This will enable the development of systems that can predict how to best assist individuals with their unique queries. As a practical example, this project’s findings could help build a system that automatically recognizes that a user is shopping for a car, then help that person compare cost-benefits of new vs. used cars, compare buying vs. leasing options, and ultimately make an informed decision. 
This research will be integrated with educational activities via the development of modules to supplement courses in iSchools, library/information science programs, etc. This is particularly important, as it will allow a broad range of students to learn about new searching methods and related user studies and evaluation.

Duration: Summer 2015 - 2019; Students: Matt Mitsui, Soumik Mandal

NeuIR

The NeuIR (pronounced “New IR”) Group is focused on solving problems at the intersection of Deep Neural Networks, Big Data, and Multimedia Information Retrieval. We understand the importance of different forms of data (text, speech, images, sensor data), and strive to achieve the following research goals:

- (a) Exploring different deep learning techniques in an effort to advance artificial intelligence in information retrieval;

- (b) Identifying challenges in handling big data and developing best practices to solve these problems;

- (c) Developing newer retrieval models and evaluation metrics specific to conversational information retrieval.

Duration: Summer 2018 - 2019; Students: Souvick Ghosh, Jonathan Pulliza

Question Quality in Educational Q&A

Community Question Answering (CQA) sites have been heralded as places where Web users can exchange information and interact with peers to get crowdsourced answers to their questions. However, these venues have frequently transformed into "informal academic environments" or "knowledge hubs." In many scenarios, CQA sites have been renamed and retailored as habitats that provide online users a quintessential "virtual classroom" environment to satisfy their information needs. Therefore, there is a compelling need to assess the content present on CQA sites. That content needs to meet a high standard, providing sufficient and accurate information to satisfy users. The presence of "bad" content not only decreases user traffic but also impedes the learning process of online learners. It is critical to evaluate and tease out the reasons that make content "bad." By fixing bad content, CQA sites would give students an opportunity to learn to phrase questions better so as to satisfy users' information needs in the future. This problem applies mainly to the educational context, and it will guide interdisciplinary research that bridges Information Science and Learning Sciences.

Duration: Fall 2016 - 2019; Students: Manasa Rath

Google Analytics Project

Brainly.com is a social learning community for students and educators that encourages collaborative learning and sharing of knowledge in different academic subject areas. It has both registered users and unregistered visitors. The online activities of all its users are logged in a cloud big-data warehouse. Desalegn works with the Brainly team on descriptive analytics of online user behavior in relation to different Community Question Answering (CQA) and website metrics such as subject areas, session duration, bounce rate, etc. After analyzing the user behavior, he will work on predictive analytics based on the historical data using machine learning and deep learning.

Duration: ongoing; Lab Member: Desalegn Biru

UN Armed Conflict Project

The goal of this project is to analyze the armed conflict data compiled by the UN since World War I. Two goals drive this study. In the short term, researchers hope to predict the duration of ongoing conflicts in the Middle East, including those in Syria, Egypt, and Iran. In the long term, researchers hope to identify regions that are prone to war—whether it be civil or between states—and predict their involvement in future conflicts.

Students: Soumik Mandal, Kevin Albertson

UN General Assembly Resolutions and Voting Patterns

This project examines how we can make informed predictions about voting behavior in the UN General Assembly (GA). It analyzes text from thousands of official UN documents as well as GA voting records to find clusters based on topics, similar voting patterns, etc. Questions to be answered include:

What are the characteristics of GA documents?

How are documents adopted by UN Member States?

Which UN Member States have similar voting patterns?

How do UN Member States vote on particular topics?

Students: Kevin Albertson, Soumik Mandal, Jonathan Pulliza

Global Climate Land Energy Water Strategies (CLEWS)

The United Nations CLEWS model provides useful insights about the relationships between water, energy, climate, and land and material use on a global scale. It tries to achieve Sustainable Development Goals (SDGs) through synergizing primary energy sources.

The InfoSeeking team has been exploring the existing optimization and information visualization tools for energy planning. While most of the existing models concentrate on the economic implications of alternative sources of energy and their correlation with global temperature and emission levels, very few have concentrated on the human factors involved with energy usage. This project focuses on uncovering the various microlevel and macrolevel human factors to build a robust model that could better achieve global energy goals.

Students: Souvick Ghosh, Jiqun Liu, SeoYoon Sung, Yiwei Wang

Learning in Search: An Exploratory Field Study

In this user study, we investigate information seeking as a learning process. We present the participants with learning-related search tasks, which have been designed to represent different cognitive levels of learning. This research also examines how broadening the users' scope of information sources (e.g. web search, offline seeking, crowdsourcing) may influence their search behavior and learning outcomes. Through the analysis of both users' web log data and the field reports, we attempt to capture how users seek information both online and offline, as well as how they exhibit various behavioral patterns while completing each task according to its level of cognitive complexity. This field study, conducted over a period of two weeks, may reveal insights with regard to users' learning and information seeking processes.

Duration: ongoing; Students: Manasa Rath, Souvick Ghosh

Retrieving People: Identifying Potential Answerers in Community Question-Answering

Community Question-Answering (CQA) sites have become popular venues where people can ask questions, seek information, or share knowledge with the community. While responses on CQA sites are obviously slower than information retrieval by a search engine, one of the most frustrating experiences for an asker is when a posted question does not receive a reasonable answer or remains unanswered. CQA sites could improve the user's experience by identifying potential answerers and routing appropriate questions to them. Finding potential answerers increases the chance that the question is answered, or answered more quickly. In this project, we predict the potential answerers based on question content and user profiles. Our approach builds user profiles based on past activity. When a new question is posted, the proposed method computes scores between the question and all user profiles to find the potential answerers.
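The profile-matching step described above can be sketched as follows. This is a simplified illustration that assumes a bag-of-words profile built from the questions a user answered in the past and cosine similarity as the score; the project's actual features and scoring function are not specified here, and all function names and sample data are hypothetical.

```python
import math
from collections import Counter

def tokenize(text):
    # Deliberately naive tokenizer: lowercase and split on whitespace.
    return text.lower().split()

def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def build_profiles(history):
    """history maps each user to the texts of questions they answered before."""
    return {user: Counter(t for q in questions for t in tokenize(q))
            for user, questions in history.items()}

def rank_answerers(question, profiles, top_k=3):
    """Score the new question against every profile and return the best users."""
    q_vec = Counter(tokenize(question))
    scored = [(cosine(q_vec, profile), user) for user, profile in profiles.items()]
    scored.sort(key=lambda s: (-s[0], s[1]))  # highest score first, ties by name
    return [user for _, user in scored[:top_k]]

# Hypothetical past activity.
history = {
    "alice": ["how do plants make food photosynthesis"],
    "bob": ["solve quadratic equation algebra"],
}
profiles = build_profiles(history)
print(rank_answerers("what is photosynthesis in plants", profiles))
```

A real system would likely weight terms (for example with TF-IDF) and add activity signals such as recency or answer acceptance, but the routing loop keeps the same shape.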

Duration: Summer 2015-ongoing; Students: Long Le

Collaborative Information Seeking in Student Group Projects

To gain a better understanding of the collaborative information seeking behaviors of students conducting authentic group work projects, an exploratory study was conducted with college students working on a for-credit group project using a collaborative search system. Quantitative and qualitative data were gathered on the everyday search practices of students over the course of an authentic group project assignment, along with quality scores for sources based on a rating rubric. Results showed that student activities during collaborative information seeking relate to the quality of their search outcomes, as the effective and efficient searchers found better quality sources. Students’ pre-task attitudes and experiences toward group work also relate to the quality of their search outcomes. These findings may be useful to researchers designing and studying the effectiveness of collaborative search tools, and to instructors planning to incorporate group projects into their classes.

Duration: Fall 2014-ongoing; Students: Chris Leeder

Retrieving Rising Stars in Focused Community Question-Answering

In Community Question Answering (CQA) forums, there is typically a small fraction of users who provide high-quality posts and earn a very high reputation status from the community. These top contributors are critical to the community since they drive the development of the site and attract traffic from Internet users. Identifying these individuals could be highly valuable, but this is not an easy task. Unlike publication or social networks, most CQA sites lack information regarding peers, friends, or collaborators, which can be an important indicator signaling future success or performance. In this project, we attempt to perform this analysis by extracting different sets of features to predict future contribution.

Duration: Summer 2015-ongoing; Students: Long Le

Prediction and Recommendation in Exploratory Search

Analyzing and modeling users' online search behaviors when conducting exploratory search tasks could be instrumental in discovering search behavior patterns and then assisting users in reaching their search task goals. We propose a framework for evaluating exploratory search based on implicit features and user search action sequences extracted from transactional log data to model different aspects of exploratory search, namely uncertainty, creativity, exploration, and knowledge discovery. We show the effectiveness of the proposed framework by demonstrating how it can be used to understand and evaluate user search performance and thereby make meaningful recommendations to improve the overall search performance of users. In the experimental analysis, we used data collected from a user study in which 18 users conducted an exploratory search task over two sessions with two different topics. With this analysis we show that we can effectively model their behavior using the implicit features to predict the future performance level of the user with above 70% accuracy in most cases. Further, using simulations, we demonstrate that our search-process-based recommendations improve the search performance of low-performing users over time, and we validate this using both qualitative and quantitative approaches.

Duration: Summer 2014-ongoing; Students: Chathra Hendahewa

Sensor-Aware Information Seeking Behavior

To what extent, if any, do behaviors observable via smart-phones relate to information behaviors? To what extent, if any, do behaviors observable via wearable devices relate to information behaviors? To what extent, if any, are personal signals and social signals different in terms of studying behaviors? These are the types of questions we are investigating in research that uniquely combines studies of fundamental human behaviors and information seeking using mobile and wearable technologies.

Through the data and sensors available on mobile phones, we can observe an individual's behavior as follows:

Social Interaction: Number of phone calls and/or SMS, Number of contacts for calls/SMS, etc.

Geolocational Movement: Number of cell towers that the phone has been connected with during a particular time period.

Wearable devices focus more on health-related signals from users.

Physical Activities: Number of steps, Time of working out, Time of sleep, etc.
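As a small illustration of how one of the signals listed above could be derived, the sketch below counts the distinct cell towers a phone connected to within a time window. The log format (a list of timestamp and tower ID pairs) and the function name are assumptions made for the example, not the project's actual data schema.

```python
from datetime import datetime

def distinct_towers(log, start, end):
    """Count distinct cell tower IDs seen in the half-open window [start, end)."""
    return len({tower for ts, tower in log if start <= ts < end})

# Hypothetical connection log: (timestamp, tower ID).
log = [
    (datetime(2015, 3, 1, 9, 0), "T1"),
    (datetime(2015, 3, 1, 9, 30), "T2"),
    (datetime(2015, 3, 1, 10, 15), "T1"),
    (datetime(2015, 3, 1, 13, 0), "T3"),
]
morning = distinct_towers(log, datetime(2015, 3, 1, 8, 0), datetime(2015, 3, 1, 12, 0))
print(morning)
```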

Duration: Spring 2015-ongoing; Students: Dongho Choi, Serife Uzun

Question Difficulty

The primary objective of this research was to propose a method that could categorize questions by difficulty level on Community Question-Answering (CQA) sites. The problem investigated in this research is significant because the method can also be applied to categorizing questions by grade level. For this project, Long worked on Biology and Physics questions posted on the educational Q&A site Brainly. This research can be integrated with user-profile matching and the question recommendation process on CQA sites.

Duration: ---; Students: Long Le, Manasa Rath

Live User Study for Exploratory Search

During Summer 2014, we designed and carried out a new user study involving individual users conducting exploratory search tasks in a live setting. The study instructed participants to install the Coagmento toolbar and sidebar, integrated into a browser add-on, on their computers so that we could capture transactional log data while the participants surfed the Web to collect information that would help them accomplish an exploratory search task. The participants were asked to work on the same task for 1-2 hours on two different topics chosen according to their preference from a list of five topics (Health, Technology, Environment, Art, Entertainment). We also gave pre- and post-task quiz questions to evaluate the users' prior and acquired knowledge of their preferred topics. The study recruited 18 individual participants. Each participant was compensated with Amazon gift cards upon successful completion of both sessions. The data collected was analyzed to build prediction models for search processes. The data was also used to provide search trail recommendations and query trail suggestions to improve the diversity, relevance, and information coverage of users' searches.

Duration: Summer 2014-Fall 2014; Students: Chathra Hendahewa, Matt Mitsui

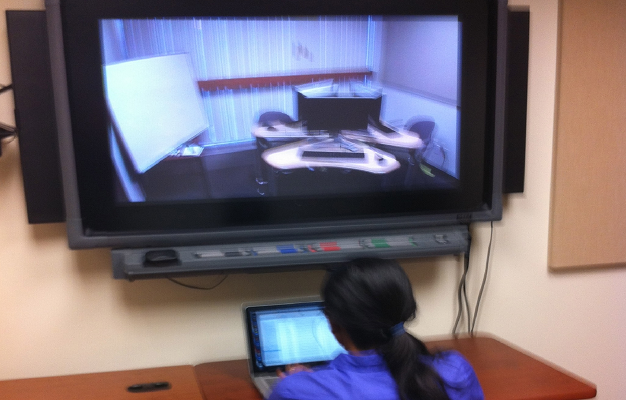

Individuals vs. Dyads vs. Triads for Informational Tasks

During Spring and Summer 2013, we conducted a laboratory study to investigate how people search for information online. Participants took part in two sessions, either as individuals or in teams of two or three. We aimed to recruit approximately 60 participants and surpassed this target by recruiting a total of 70. The study used the Coagmento toolbar and sidebar, integrated into a browser, to capture data while the participants surfed the Web to collect information that would help them accomplish a task. The first task was an exploratory search task to write a report with a 30-minute time limit. The second task was a fact-finding task in which the participants had to answer multiple questions requiring short answers with a 40-minute time limit. Each participant was compensated with cash. We recruited participants with the following criteria:

From this study, we collected search log data, video and audio data, mouse movements and screen captures of all the sessions. In addition, we got user feedback from short interviews.

Duration: Summer 2013-Summer 2014; Students: Chathra Hendahewa, Roberto Gonzalez-Ibanez

Investigating Features that Contribute to Increasing Question Quality in Social Q&A

The study will examine what features contribute to creating a quality question within the SQA platform, Yahoo! Answers. Specifically, the study will examine how textual features, such as answer length and TF/IDF frequency, contribute to determining question quality, using non-textual features derived using human assessors as a quality standard. In addition, if a question is of poor quality, we will also ask why this is, by asking assessors to rate question quality along a series of factors, such as clarity and complexity, and mapping textual features to these factors. By taking this approach, we hope to derive an automated model for question quality that can not only predict question quality, but also provide reasons why a question is of poor quality, which will yield suggestions for how to improve the question.

Duration: Fall 2011-Spring 2013; Students: Erik Choi, Vanessa Kitzie

User Search Process Segmentation for Understanding Underlying Search Strategies

Analyzing the different search processes users follow to achieve different search goals is vital to understanding the underlying strategies they adopt in performing such tasks. Moreover, information seeking models in the literature, such as the Information Search Process model, the Anomalous State of Knowledge model, and the Behavioral Model for Information Seeking, make it evident that a user's search process involves many stages from inception to reaching the goal. In this project, we aim to segment user search processes, which are composed of time series of search-related actions, using a machine-learning-based approach, and to analyze whether the segmentation divides the overall search process into stages in which users adopt different inter-stage strategies in order to optimize final search performance.

Duration: Summer 2013-Spring 2014; Students: Chathra Hendahewa

Investigating Motivations and Expectations of Asking a Question in Social Q&A

Social Q&A (SQA) has rapidly grown in popularity, impacting people’s information seeking behavior. Although research has paid much attention to a variety of characteristics within SQA to investigate how people seek and share information, fundamental questions of user motivations and expectations for information seeking behaviors in SQA remain: Why do people use SQA to ask a question? What are the users’ expectations with respect to the responses to their questions? The current study applied the theoretical framework of uses and gratification to investigate the motivations for SQA use, and adapted criteria people employ to evaluate information from previous literature in order to investigate expectations with regard to evaluation of content within SQA.

Duration: Summer 2013-Spring 2014; Students: Erik Choi

Questioning the Question – Addressing the Answerability of Questions in Community Question-Answering

Most research on community question-answering (cQA) services focuses on answer ranking, retrieval, and assessment with little attention given to question quality. A drawback to these studies is their assumption that the questions asked are of suitable quality to potentially receive a good answer in the first place. Yet evidence indicates this is not the case and that question type and content affect the number and quality of answers received. In this research project, Dr. Chirag Shah, Erik, and Vanessa focus on questions posted on Yahoo! Answers to investigate what factors contribute to the goodness of a question and determine if we can identify bad questions in order to allow askers to revise them before posting.

Duration: Spring 2012-Fall 2014; Students: Erik Choi, Vanessa Kitzie

Investigating Implications of Positive and Negative Affects in Collaborative Information Seeking

This research focuses on the study of positive and negative affects in the information search process of both individuals and teams. Two important questions addressed in this study are: (1) Do we feel a certain way because we find (or don't find) relevant information? and (2) Do we find (or don't find) relevant information because we feel a certain way? Theories and models from social psychology suggest that both positive and negative emotions have important implications for the way we perform daily activities such as team work, learning, social interactions, and decision making, to name a few. To what extent do positive and negative affects (as well as related affective processes) influence the way in which both individuals and teams search, assess, and use information?

We designed and conducted a lab study involving 142 participants with specific experimental conditions to evaluate the effects of affective processes in the information search process of both individuals and teams. From each participant, multimodal data (including electrodermal activity, facial expressions and gestures, eyetracking data, users' actions, communication logs, and browsing logs, among others) was collected.

Duration: Spring 2012-Fall 2014; Students: Roberto Gonzalez-Ibanez

Support for Online Synchronous Collaborative Information Seeking

This research aims to investigate the effects of various situations and support mechanisms, defined by participants’ social and emotional interactions, for people working synchronously on online collaborative information seeking/searching tasks. We created the Coagmento system and mobile apps to support this.

Duration: Fall 2010-Fall 2011; Students: Roberto Gonzalez-Ibanez

Measuring Content Quality in Online Q&A

The primary objective of this research is to propose and evaluate a model of content quality in online Q&A environments, which include expert-based Q&A services such as IPL and Ask-a-Librarian, as well as social/community Q&A services such as Yahoo! Answers and WikiAnswers. Vanessa worked on this as a part of the OCLC/ALISE research grant award for 2011.

Duration: Fall 2010-Spring 2012; Students: Vanessa Kitzie

Investigating Failed Questions in Social Q&A

This research focuses on identifying the statistical characteristics of both the content of a failed question and the asker who raises it, to see whether these characteristics make failed questions statistically significantly different from well-answered questions. Additionally, we compared the prediction model built upon these statistical characteristics with a recent qualitative analysis that focused on constructing human criteria for evaluating a question’s likelihood of failure, in order to study how a machine prediction model differs from human judgment.

Duration: Fall 2010-Spring 2012; Students: Erik Choi, Vanessa Kitzie

Query Formulation and Search Strategies

This research aims to investigate (1) the effects of the query suggestion features provided by most major search engines, as well as (2) the effects of the nature of the search task: whether it is for simply collecting information, or for using that collected information for a higher purpose. There were three conditions: (1) Google Instant, (2) Query Suggestions only, and (3) Basic Search Interface. Participants were randomly assigned to one of these conditions for the two tasks they were required to complete. Abhijna and Kanan conducted a lab study for this during Fall 2010. They presented the findings at the undergraduate Aresty research symposium as well as at Research Day at SC&I. They also won an award for outstanding research in information technology after being nominated by Professor Shah.

Duration: Fall 2010-Spring 2011; Students: Abhijna Baddi, Kanan Parikh

Use of Facebook in Understanding Political Discourse

It is not uncommon to use social media/networking services such as Facebook to understand people's opinions and sentiments on a social-political issue. We collected and analyzed data from Facebook for several topics of socio-political importance.

Duration: Fall 2010-Spring 2011; Students: Charlie File, Tayebeh Yazdani nina

Identifying Roles of Participants in Collaborative Projects

In collaborative projects, roles (e.g., leader and follower) often emerge as the participants work through them. We looked at the relation of such roles to the outcomes of the collaboration.

Duration: Fall 2010-Spring 2011; Students: Daulat Lakhani