FATE project – What is it about and why does it matter?

FATE is one of the most active projects in our InfoSeeking Lab. The name stands for Fairness, Accountability, Transparency, and Ethics. FATE aims to address bias in search engines such as Google and to find ways to de-bias the information presented to end users while maintaining a high degree of utility.

Why does it matter?

There is plenty of past evidence of search algorithms reinforcing biased assumptions about particular groups of people. Below are some examples of search bias affecting the Black community.

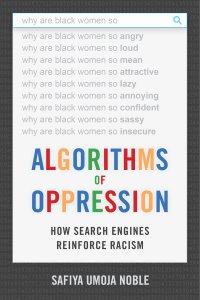

Search engines suggested stereotyped words when completing queries about Black women, such as ‘angry’, ‘loud’, ‘mean’, or ‘attractive’. These auto-completions reinforced biased assumptions about Black women.

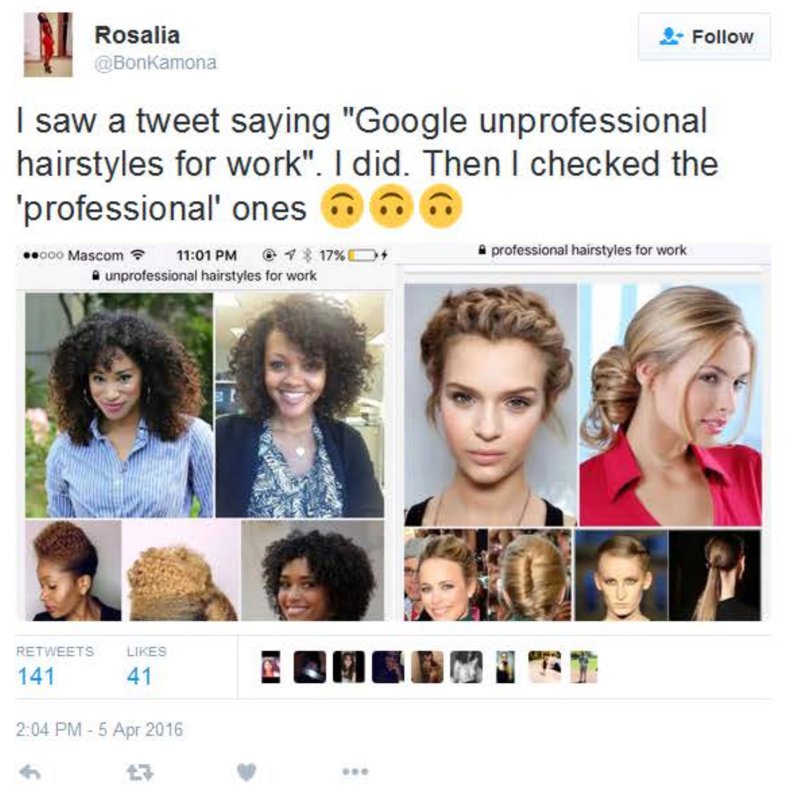

Image search results presented Black people’s natural hair as unprofessional, while presenting white Americans’ straight hair as a professional hairstyle for work.

A search for “three black teenagers” returned mug shots of Black teens, while a search for “three white teenagers” returned images of smiling, happy white teenagers.

Some of these issues persisted for many years before someone uncovered them, which then sparked changes to address them.

At FATE, we aim to address these issues and find ways to bring fairness to information seeking.

If you want to learn more about what we do or get updates on our latest findings, check out our FATE website.